Scrutinizing and De-Biasing Intuitive Physics with Neural Stethoscopes

Published at BMVC 2019

Authors: Fabian Fuchs, Oliver Groth, Adam Kosiorek, Alex Bewley, Markus Wulfmeier, Andrea Vedaldi, Ingmar Posner

Predicting the stability of block towers is a popular task in the domain of intuitive physics. Previously, work in this area focused on prediction accuracy, a one-dimensional performance measure. We provide a broader analysis of the learned physical understanding of the final model and how the learning process can be guided.

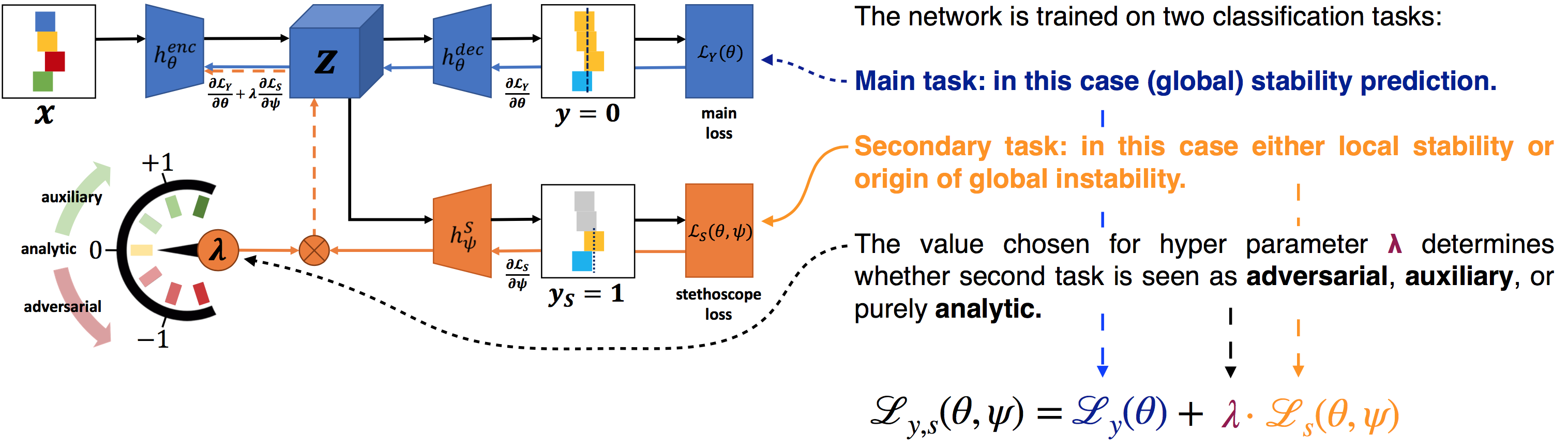

To this end, we introduce neural stethoscopes as a general purpose framework for quantifying the degree of importance of specific factors of influence in deep neural networks as well as for actively promoting and suppressing information as appropriate. In doing so, we unify concepts from multitask learning as well as training with auxiliary and adversarial losses. We apply neural stethoscopes to analyse the state-of-the-art neural network for stability prediction. We show that the baseline model is susceptible to being misled by incorrect visual cues. This leads to a performance breakdown to the level of random guessing when training on scenarios where visual cues are inversely correlated with stability. Using stethoscopes to promote meaningful feature extraction increases performance from 51% to 90% prediction accuracy. Conversely, when trained on an easy dataset where visual cues are positively correlated with stability, the baseline model learns a bias that leads to poor performance on a harder dataset. Using an adversarial stethoscope, the network is successfully de-biased, leading to a performance increase from 66% to 88%.
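One way to picture how a single framework can cover analysis, auxiliary training and adversarial training is a signed weight on the stethoscope's loss. The sketch below is an illustration under stated assumptions, not the paper's implementation: the weight `lam`, the function names and the mode labels are hypothetical and do not appear on this page. It assumes the stethoscope itself is always trained to minimise its own loss, while the main network sees that loss scaled by `lam`.

```python
def encoder_loss(task_loss, stethoscope_loss, lam):
    """Loss seen by the main network (hypothetical formulation).

    task_loss        -- loss on the main task, e.g. stability prediction
    stethoscope_loss -- loss of the stethoscope on its supplemental task
    lam              -- assumed signed weight on the stethoscope loss
    """
    return task_loss + lam * stethoscope_loss


def stethoscope_mode(lam):
    """Name the training regime implied by the sign of lam (assumed convention)."""
    if lam > 0:
        return "auxiliary"    # promote information useful for the supplemental task
    if lam < 0:
        return "adversarial"  # suppress information useful for the supplemental task
    return "analytic"         # only measure; the main network is not influenced


# Example: a negative weight rewards the main network when the stethoscope does badly.
print(stethoscope_mode(-2.0), encoder_loss(1.0, 0.5, -2.0))
```

With this convention, setting the weight to zero leaves the main network untouched and turns the stethoscope into a pure probe of what information is already present in the features.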

Paper

- find the paper here

Dataset

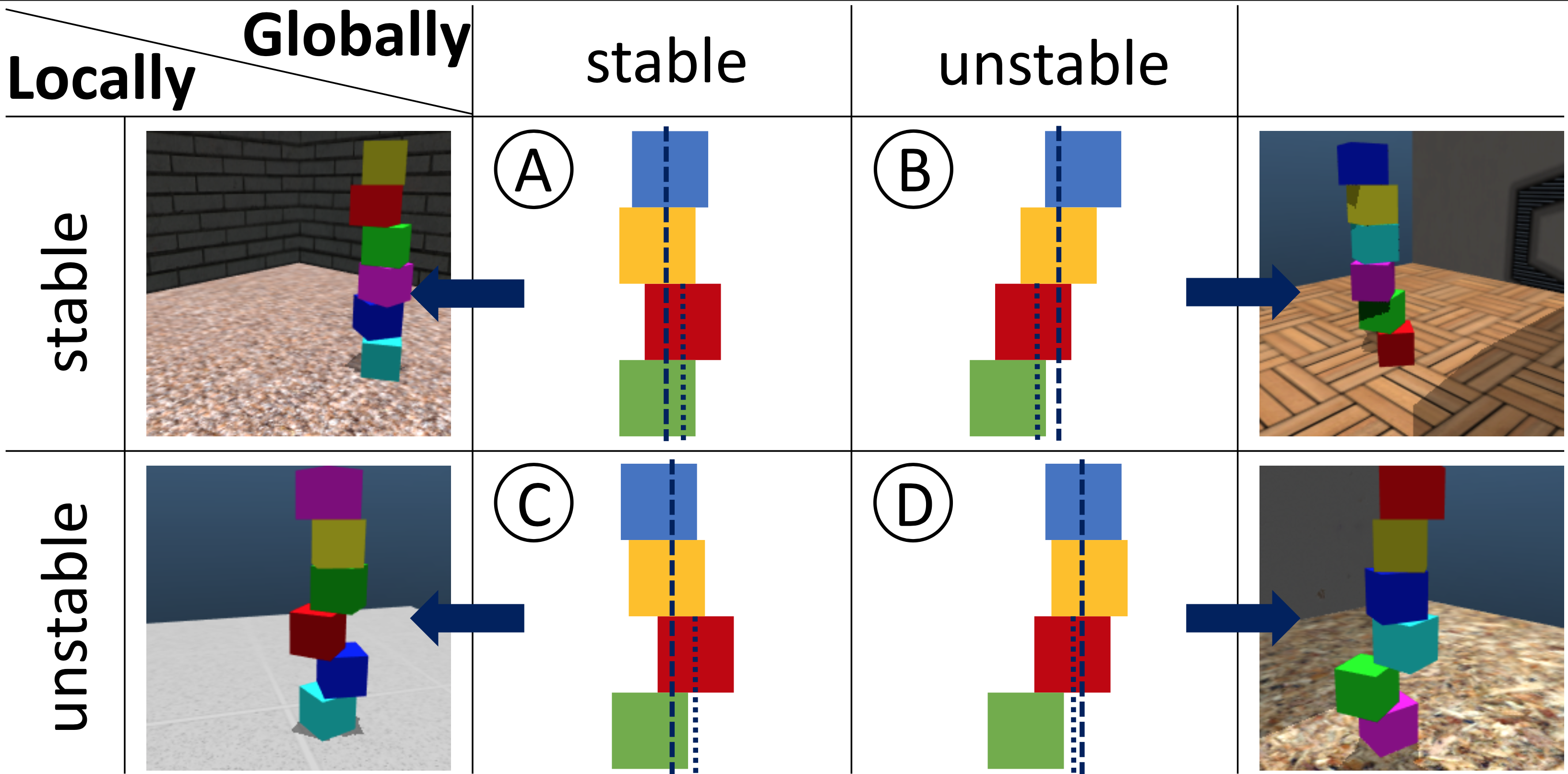

We extended the ShapeStacks dataset to tailor it specifically towards scrutinising biases in the physical reasoning of a trained model. The additional label of local stability allows for investigating to what extent a trained model follows visual cues. In the paper Scrutinizing and De-Biasing Intuitive Physics with Neural Stethoscopes, we propose a framework that combines adversarial and auxiliary training to promote or suppress the extraction of specific features and thereby guide the learning process.

How to use it

RGB images (7.1 GB):

steth_shapestacks_rgb.tar.gz

steth_shapestacks_rgb.tar.gz.md5

MuJoCo world definitions (optional, 30 MB):

steth_shapestacks_mjcf.tar.gz

steth_shapestacks_mjcf.tar.gz.md5
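Each archive ships with an `.md5` file, so a download can be checked before it is unpacked. The following is a minimal sketch using only the Python standard library. It assumes the `.md5` file follows the common `md5sum` layout (hex digest first, then the file name), which this page does not confirm; the helper names and the target directory are hypothetical.

```python
import hashlib
import tarfile
from pathlib import Path


def md5_of_file(path, chunk_size=1 << 20):
    """Return the hex MD5 digest of a file, read in chunks to bound memory use."""
    digest = hashlib.md5()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def read_expected_md5(md5_path):
    """Read the expected digest; assumes md5sum-style content '<hex digest>  <file name>'."""
    return Path(md5_path).read_text().split()[0].lower()


def verify_and_extract(archive_path, md5_path, target_dir):
    """Extract the archive into target_dir only if its MD5 digest matches."""
    actual = md5_of_file(archive_path)
    expected = read_expected_md5(md5_path)
    if actual != expected:
        raise ValueError(f"MD5 mismatch: expected {expected}, got {actual}")
    with tarfile.open(archive_path, "r:gz") as archive:
        archive.extractall(target_dir)
    return actual
```

For the RGB images this could be called as `verify_and_extract("steth_shapestacks_rgb.tar.gz", "steth_shapestacks_rgb.tar.gz.md5", "data")`, where `data` is a placeholder for the directory of your choice.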